1 | AI-generated image of Donald Trump amid supporters during the 2024 campaign.

Introduction

In recent years, public discourse has filled with labels such as fake news, post-truth, and alternative facts. These terms signal not only the circulation of false content, but a deeper transformation in the epistemic ecology of political communication. Within this framework, many communicative practices aim less to establish what is true than to render the very criteria of verification ineffective, irrelevant, or suspect (Keyes 2004; McIntyre 2018). Moreover, political competition shifts communication from the terrain of evidence to that of belonging, where the validity of a claim depends on its identity-based and affective function. These controversies concern images as well: pictures are digitally enhanced or modified, misattributed, or outright artificially generated.

In this setting we are witnessing an epistemic corrosion: a growing difficulty in maintaining an ordered public discourse in which the circulation of information is regulated by shared criteria of empirical validity and by stable relations of trust with sources. The problem is not only that clearly false items circulate. It is that we increasingly live in borderline conditions in which the same sign, text, or image can be pragmatically used in more than one way, and its status is left intentionally, or tacitly, undecided. The same content can oscillate between truth-apt reporting and fiction; between seriousness and parody; between mere “illustration” and evidential demonstration. In parallel, trust in sources is eroded in two complementary ways: by openly contesting the authority of institutions built around verification (journalism, courts, historical scholarship, science), and by blurring the distinction between accountable sources and ideologically driven outlets. Digital circulation and the rapid development of generative AI add a further pressure: they make the origin and causal production of images harder to assess (Wardle & Derakhshan 2017; Chesney, Citron 2019a).

A striking symptom of this condition, and the premise of this paper, is the fact that images that are clearly misattributed, manipulated, or explicitly recognized as artificial can still be processed as evidence-like by some of their recipients. One way to explain this is to look at the recipient side of image reception and interpretation. In politically polarized contexts, images insert themselves into an epistemic environment that can be described as tribalized: a setting in which informational items are primarily evaluated as tokens of affiliation and instruments of orientation. Within such an environment, images tend to be, on the one hand, rapidly typified as instances of familiar narratives (“This is what they are like”, “This is what is happening”) or transformed into iconic imagery: for example, Donald Trump raising his fist after the assassination attempt in 2024 did not remain a singular record of a specific moment. It was rapidly iconized, becoming a visual type for a broader narrative, like defiance, resilience, “leadership under attack”, national redemption, etc. On the other hand, generic or even non-pertinent images become fungible as quasi-evidence: the same image of a road full of walking immigrants illustrates but also visually demonstrates every instance of a refugee crisis. What matters is less the image’s strict probative link to a specific event than its capacity to function as an index-like support for a position. The observer here does not need to believe that the depicted scene is literally true; it is often sufficient that they accept it as a premise within a narrative, and that they are committed to publicly affirming it as a valid piece of information. The difference between believing, accepting, and being committed to some “truth” will be clarified below.

In the specific and contemporary case of AI-generated imagery, several features intensify this blurring between ideologically-laden use and evidentiary value. First, AI images produce a strong effect of realism: even when viewers can recognize artifacts or suspect synthesis, the output reproduces the perceptual grammar of camera media closely enough to activate a default ‘witnessing’ stance. A reflective judgment (“this is AI-generated”) does not necessarily cancel the perceptual effect produced by camera-like simulated photorealism. In other words, skepticism can coexist with a residual “witnessing mode” in which the image is stored and circulated as if it were a view onto a scene. Even when viewers explicitly say “it’s fake”, the visualization can still function as evidence of plausibility, and plausibility can slide into prevalence (“this sort of thing is everywhere”), supporting generalized accusations and threat narratives. Moreover, a well-known cognitive pattern is that content is retained more robustly than source. When an image is encoded in a witnessing mode, the likelihood that it will later be recalled as “something seen” increases.

Moreover, generative systems tend to draw on general stereotypes and culturally typical templates, since they are trained on large-scale visual archives and optimized for legibility: therefore, their outputs often look immediately familiar and thus readily classifiable within existing political typologies. This stereotypicality is frequently amplified through mechanisms of essentialization: generative images do not merely depict individuals and situations, but push them toward salient, condensed, and emotionally legible cues. Moreover, AI imagery often presents itself as impersonal. The weakening of specific authorial reference in AI-generated content can increase its apparent neutrality and reduce interpretive resistance. In practice, the loss of a concrete author can paradoxically strengthen the image’s claim to be “just showing” a state of affairs, and thus intensify its communicative force as a particular kind of assertion.

The argument developed in the following sections builds on these observations. First, I briefly show that the epistemic and evidentiary value of images, especially photographic images, has long been contested and has repeatedly generated pragmatic tensions. Second, I characterize polarized epistemic environments as contexts in which belief is often displaced by acceptance and public commitment, producing what I have elsewhere called “staged belief” (Arielli 2024). Third, I analyze misattributed and recycled photographs as instances of “emblematic evidence” (Arielli 2019), meaning images that function simultaneously as illustrative emblems and as evidence-shaped proof, drawing on the documented tendency of photographs to inflate perceived truth even when they are non-probative (Newman et al. 2012). Fourth, I introduce the concept of “synthetic indexicality” to explain how AI-generated images intensify this mechanism by preserving indexical feeling while being clearly fabricated. Finally, I connect synthetic indexicality to debates on platform realism (Meyer 2025a, 2025b) to clarify why generative imagery is particularly compatible with political communication organized around threat and polarization.

Varieties of photographic deception

Images, like other sign systems, can be used in several ways. They can be deployed to support a thesis, to illustrate an idea, to elicit an affective or aesthetic response, to consolidate a myth, or to establish a fact. In many cases, the image’s function is not epistemic at all. It may be social, expressive, ritual, or identity-marking. The relevant question is therefore primarily pragmatic rather than ontological. What an image is taken to be doing depends on shared conventions between those who produce and circulate it and those who receive it. One of these shared assumptions is that photography and video have been widely understood as indexical media. Following the traditional Peircean framing (Peirce 1931-1958), they are thought to bear a physical, causal connection to a particular situation that occurs at a time and a place. This has been repeatedly rearticulated in twentieth-century theories of photography. Roland Barthes famously treated the photograph as a privileged case of indexical reference, stressing its “having-been-there” character and the way it compels the viewer to confront the pastness of the depicted event (Barthes [1980] 1981). A related, but more explicitly epistemological claim is Kendall Walton’s thesis of photographic transparency: in standard viewing conditions, photographs function less like pictures we merely interpret and more like perceptual access to their subjects, as if we were literally seeing through the image to the world (Walton 1984). Together, these accounts help explain why camera images are routinely seen as trace-like and as a direct source of evidence.

However, the idea that indexical images automatically function as factual proof without remainder has always rested on unstable ground (Doane 2007; Gunning 2004; Mitchell 1992). From its inception, photographic evidentiary authority has depended on contextual and institutional supports that stabilize provenance, sequencing, framing, and interpretation (Mnookin 1998; Tagg 1988). The history of photography is also a history of contested proof. It is not simply that photographs can be falsified. It is that their epistemic value is not self-contained: it is socially negotiated and constantly contestable. This is why the contemporary panic about synthetic media, or so-called “deepfakes”, while justified in many respects, should not be framed as the arrival of deception into an otherwise transparent visual regime.

In fact, at least four recurrent varieties of “photographic deception” are worth distinguishing (see also Allbeson, Allan 2018):

1) The first is “direct manipulation” of the image itself. Retouching, compositing, erasing, darkroom alteration, and now digital editing can create the appearance of an indexical trace that is not anchored in the depicted event. This is the clearest case of fabrication, because it simulates the trace-character of photography.

2) The second variety is “staging” of the depicted scene. A scene may be arranged, posed, or performed for the camera, while still being photographed through genuine optical registration. Here the deception lies not in the causal process of image formation but in the event that the camera registers.

3) The third variety is “misattribution” and recontextualization (Arielli 2019). A photograph can be authentic in itself and yet false in use, because it is presented as evidence for another event, another location or another time. The deception operates at the level of framing and captioning.

4) The fourth variety is interpretive distortion produced by ambiguity. Even when provenance is clear and no manipulation is involved, an image can be read in incompatible ways, and these readings can be strategically amplified. The same image becomes a battleground of inference. Photographs and videos rarely carry their meaning alone: they are fragments or traces whose interpretation depends on background assumptions, testimony, and the presence of additional evidence. A still photograph or a short clip can invite sharply opposed interpretations once it is detached from reliable contextual information and from a shared account of what led to what. For example, the disputes around the killings of Renée Good and Alex Pretti in Minneapolis by ICE (Immigration and Customs Enforcement) agents in January 2026 show how competing camps can treat the same visual material as either evidence of defensive restraint or of reckless, excessive force, with official statements and public readings of the footage pulling in different directions. The epistemic vulnerability here is the product of images’ underdetermination.

The possibility of deception has often been used as an argument for denying the epistemic relevance of photographic evidence. A famous example is Richard Nixon’s reaction to the photograph known as Napalm Girl. According to audiotape recordings, Nixon raised the possibility that the photograph had been staged or otherwise manipulated. The episode is instructive because it shows how quickly a camera image can be repositioned as suspect, even when it later becomes an iconic reference point. The line from “photographs can be faked” to “this photograph might be fake” can be drawn with minimal evidential work, especially when the stakes are political (Westrick 2019).

Precisely because photographic indexicality does not, by itself, settle questions of provenance, sequencing, or meaning, photographs acquire evidentiary force only within an external scaffolding of authentication and corroboration: trusted sources, aligned timelines, testimonial networks, and additional traces that stabilize what the image is of and how it came to circulate as “proof”. From the legal perspective, courts make this dependence explicit by treating many photographs as demonstrative aids that merely illustrate witness accounts, and by granting them substantive probative status only when reliability is independently anchored through authentication procedures and additional evidence (Graham 2010). Seen from this angle, AI-generated photorealistic imagery is not a rupture in the history of visual deception. It is a qualitative and quantitative escalation of a long-standing condition. What changes is the scale, speed, and accessibility with which “proof-shaped” images can be produced and circulated. The most consequential feature of contemporary deepfakes is therefore not simply that they enable manipulated imagery and simulated indexicality, as we will clarify later, but that they make such manipulation cheap and routine, and in doing so reinforce what Chesney and Citron call the “liar’s dividend”: once synthetic media becomes a familiar possibility, it becomes easier to cast doubt on authentic images as well, fostering a generalized atmosphere in which visual evidence can be rejected on demand (Chesney, Citron 2019b).

Given these distinctions, there are at least three familiar ways in which a photographic image can be identified as fake. First, it may fail to look photographic in a strict sense, as happened with early generative AI images or with imperfect photorealistic paintings, or it may present clear evidence of retouching. Second, the depicted scene may be judged implausible, for example a public figure in an implausible situation (e.g. the Pope posing and dressed like a rapper). Third, even when neither formal oddities nor implausibility in the content are present, an image can be classified as fake on the basis of background information, such as reliable reports of manipulation, knowledge that it was produced by an AI, or evidence that it was staged or decontextualized. Each of these criteria can be ambiguous and an object of debate: many AI images look photographic while still carrying small cues that trigger suspicion. Some scenes might be improbable but not impossible (like a picture of Trump amid African-American supporters [Fig. 1]). And provenance information can itself be contested, especially in environments where trust is fragmented, such as in ideologically polarized contexts.

Epistemic stances and polarization

The general assumption about visual disinformation presupposes a deceptiveness model: a false image works when it is mistaken for an authentic photograph of the described event. But this is, at best, only one part of the mechanism. In polarized environments, images often remain pragmatically effective even when their status is contested, when they are publicly debunked, or when their artificial character is plain. In these circumstances, the epistemic uptake of images shifts away from true belief, understood as an inner conviction depending on reasons, and toward public stances that are more relevant in cases of ideological alignment: belief gives way to acceptance and commitment (Arielli 2024).

Belief is typically understood as a relatively involuntary disposition: one finds oneself convinced that p is true, and this conviction is in principle answerable to evidence. It is not something one can simply decide to adopt at will without falling into a form of doxastic voluntarism (that is, “deciding to believe” something: Williams 1973). Acceptance, by contrast, is a practical act: treating p as true for the purposes of reasoning, action, or coordination in a given context, even without (or despite) full conviction. To give an example, in legal contexts, a defense attorney may accept a client’s innocence as the premise of argument, while privately suspending belief or even harboring doubts. As Cohen argues, acceptance is not merely a weaker belief; it is a different attitude with a different function (Cohen 1992). Acceptance is particularly relevant in contexts where public communication is ideologically driven, since it is a stance we can take up and sustain in practice. In polarized political settings, it is not enough that a claim be accepted as a premise. What matters is that it is backed by a publicly recognizable posture of steadfastness: this is the case of being openly committed. Commitment is stronger than mere acceptance because it involves occupying, and being seen to occupy, the role of a believer. A committed speaker does not merely ask others to accept p for some limited purpose. They present p as something they stand behind, and they signal that they will not easily retreat from it. In this sense, commitment includes not only acceptance of p, but also the willingness to sustain a second-order stance: the acceptance of believing that p, or at least of being answerable as if one believed it. This is what we might call a form of staged or performed belief (Arielli 2024).

Polarized contexts are structurally conducive to the displacement of belief through one-sided acceptance and commitment because they corrode shared criteria for what counts as a good reason, a credible source, or an adequate refutation. What David Roberts popularized as “tribal epistemology” is precisely the condition in which standards of evidence become subordinated to group allegiance: information is evaluated primarily by whether it supports the tribe’s narrative (Roberts 2017). Similarly, Regina Rini calls this “partisan epistemology”: a systematic tendency to overweight testimony from perceived allies and underweight testimony from perceived opponents, generating predictable asymmetries in what different groups treat as credible (Rini 2017). On the empirical side, cultural-cognition research has documented “identity-protective cognition”, a pattern in which people selectively credit or dismiss evidence in ways that protect their standing within an identity-defining group. The common denominator is that the function of public communication shifts: it is less a mechanism for updating beliefs in light of new evidence, and more a mechanism for expressing alignment, policing boundaries, and maintaining a shared moral and explanatory frame.

In the case of visual communication, when an image circulates in a polarized environment, the question is often not whether it produces belief in a narrow epistemic sense, but whether it can be accepted and mobilized within a worldview. Acceptance here functions as a practical authorization: “Take this as showing what is going on”, “Treat this as confirming what we already know”. Commitment then enters at the level of public alignment. Sharing an image, endorsing it, or using it as a prompt for indignation is a performative act that signals an already established membership and reinforces a collective frame: there is no search for knowledge or truth but, on the contrary, a search for evidence that reinforces an already existing truth. What is taken as true is the result of a collectively supported posture of acceptance and commitment within a shared form of life. In such conditions, visual material can generate belief-like effects without being truly believed, and this is the terrain on which misattributed photographs and AI-generated images alike become rhetorically powerful.

Emblematic evidence

In polarized epistemic environments, images are frequently received through an essentialist lens: singular occurrences are treated as manifestations of pre-existing truths, not as contingent events. The photograph, in this setting, confirms what is already taken to be the case. On this view, documentary accuracy is secondary. Even misattributed images, and sometimes outright manipulations, can still be treated as “evidence” because they are taken to disclose a general truth, rather than to document a particular occurrence. Moreover, there is robust experimental literature showing that photos inflate subjective truth (so-called “truthiness”) even when the images provide no evidence for the claim. According to one such study, including nonprobative photographs (images that are related to a topic but provide no actual proof) significantly increased the likelihood that participants would believe a claim was true (Newman et al. 2012).

A notable example was the AfD (Alternative für Deutschland) communication during the 2017 German election campaign. The party circulated a social media ad featuring a close-up of a woman being harassed by men described as “Arabic-looking”. The image was overlaid with the provocative caption “Do you remember…? New Year’s Eve!” and the hashtag “#govote”, directly referencing the 2015/2016 mass harassment of women by men with immigrant backgrounds at the Cologne central station. However, the advertisement was a significant decontextualization and manipulation of reality. The photograph was actually taken in 2011 during the Tahrir Square protests in Egypt, depicting the harassment of an American journalist. The ad was also the product of visual manipulation: to further distance the image from its original source, the face of the harassed woman was replaced with the portrait of a model. When accused of spreading “fake news”, an AfD spokesperson defended the ad by claiming the specific origin was irrelevant. He argued that the picture should be taken for its symbolic and illustrative value as a “hassled-woman-picture”, stating that what mattered most was “getting the right message over”.

This example illustrates the shift from a specific indexical document (a photo of a unique event in Cairo) to an iconic archetype that still retains the evidentiary value of an index (since it represents a real occurrence). For the target audience, the image stopped being a record of a specific 2011 event and instead became evidentiary confirmation of a perceived general phenomenon: “Arabic-looking men against defenseless white women”. With the misattribution ignored, the image was used to treat the events in Cairo and Cologne as essentially two occurrences of the same phenomenon, allowing a non-pertinent visualization to function as pseudo-evidence for the party's ideological narrative. In other words, in an essentialist worldview, both were contingent manifestations of the same general phenomenon, and therefore one could be used to showcase the other.

I propose to call this hybrid use “emblematic evidence” (or “illustrative proof”: Arielli 2019), an ambiguous status where images oscillate between their emblematic function (showing “what this kind of thing looks like”) and an evidentiary function (serving as if they were traces of “what happened here and now”). Photographic images are indexical traces that may later be generalized into symbols, or icons. One example we already mentioned is the image of Trump raising his fist after the assassination attempt. It did not remain a singular record of a specific moment, but was rapidly iconized and then typified, becoming a visual type and a reusable schema for a broader narrative: defiance, resilience, “leadership under attack”, national redemption, etc. Emblematic evidence reverses this direction. In such cases, a generic or merely illustrative image, including manipulated or misattributed ones such as photographs from the Tahrir Square protests, is made to trigger a stereotype that proves the specific event at issue, for example the assaults in Cologne on New Year’s Eve 2015, since it highlights how the event is a manifestation of a universal phenomenon. In this way, an emblem is converted into quasi-evidence: it does not add information but dictates, in advance, how the event is to be read. What is at stake here is the slide from exemplification to quasi-proof: the representation becomes a cue about unrelated facts because the type is granted priority over the individual case.

This structure exemplifies an essentializing, archetypal hermeneutics: singular occurrences are treated primarily as instances of a prior type, while contingency and context recede. In philosophical terms, it aligns with a broadly Platonic model of intelligibility, where particulars matter insofar as they instantiate a general form. Mircea Eliade’s account of ‘mythic’ or archetypal temporality offers a close analogue: events acquire meaning and perceived reality to the extent that they are read as repetitions of exemplary models, whereas what is merely singular is treated as secondary and inessential (Eliade [1949] 1954). Something similar holds in contemporary polarization, except that the archetypes are political: “The violent foreigner”, “The corrupt elite”, “The deviant minority”, “The dangerous protester”, and so forth. Once an archetype is installed, it becomes plausible to treat any vivid visualization of it as a form of disclosure. The image is taken to make present what is always already there. A comparison with science clarifies both the attraction and the distortion involved. In experimental science, a single test can indeed function as evidence that generalizes beyond the specific trial, because the evidential logic presupposes the universality of natural laws and relies on controlled procedures that make results replicable and publicly checkable. Political emblematic evidence borrows just the form of this logic while replacing natural laws with “universals” concerning the essence of groups and social phenomena. The crucial point is that emblematic evidence is not reducible to lying or to simple error. The indexical bond to a specific time and place becomes secondary, and sometimes strategically dispensable, because what matters is the iconic confirmation of a worldview.

Synthetic Indexicality and AI images

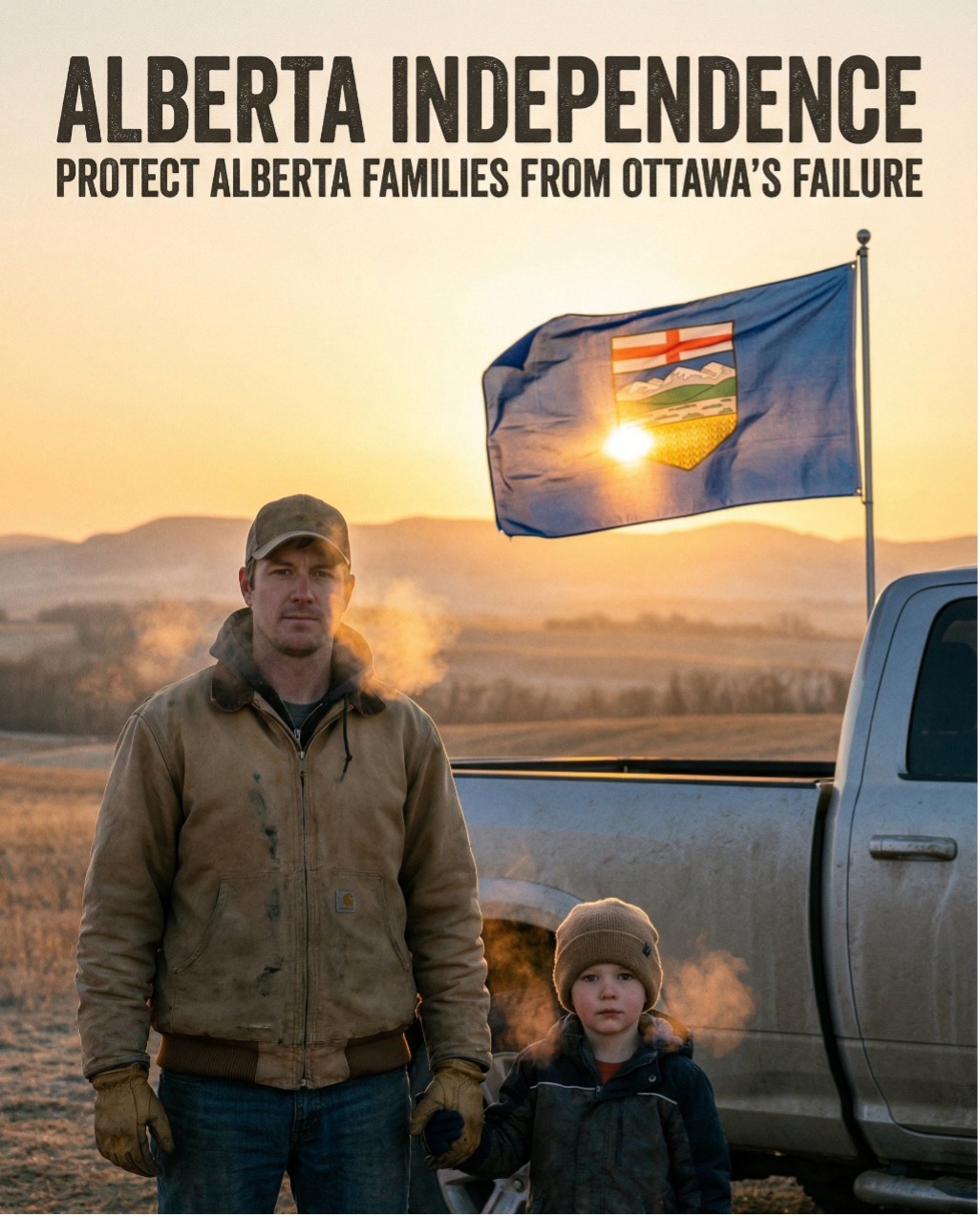

2 | AI-generated image from a site on Alberta’s independence movement.

3 | Photographic-looking AI-generated image representing a dangerous foreign presence in the UK.

The crucial question concerning generative AI is not whether AI images could be indistinguishable from photography. Often the capacity to distinguish fake from real photography depends on competence, attention, and viewing conditions. The same output can be judged real by one viewer and synthetic by another, and even a single viewer may shift judgment depending on how carefully they inspect the image. Moreover, as we argued in the previous sections, a forged image can be welcomed or rejected less on epistemic grounds than for ideological reasons, and a viewer’s prior commitments often determine whether doubt is activated (as in Richard Nixon’s suspicion that the Napalm Girl photo could have been fabricated).

Having said that, AI-generated images are effective in politically-laden communication for at least three reasons:

1) They often achieve striking visuals that preserve an appearance of photographic indexicality, and thus give an evidential feel, even when viewers recognize their artificiality;

2) AI-images appear to arise from systems whose output is presented as impersonal and quasi-neutral, since they stem from the huge archives of real photographic documents;

3) They present a hyper-real aesthetic (sometimes bordering on caricatural exaggeration) that conveys specific imagery, perceptually and cognitively, without the imperfections of real scenes. In other words, they resemble “supernormal stimuli”, in which aesthetic amplification is used to provoke and reshape the viewer’s imagination.

Concerning the first point (“evidential feel”), indexicality is not exclusively a feature of a photograph’s transparency, but it is also a stylistic feature: photorealism is the feel of indexicality, even in its absence. The impression of indexicality becomes a stylistic register based on a photographic grammar we are used to, a repertoire of perceptual cues and cultural conventions that invites a default stance of witnessing (certain lighting, depth of field, documentary framing, surveillance aesthetics, phone-video formats) and the feeling that something “happened there at that moment”. AI systems can reproduce that grammar well enough to activate the witnessing stance even when the observer is also aware of their artificiality. In general terms, this aesthetic can be described as a simulation or staging of indexicality. Even if a trained eye recognizes its artificiality, or we know from background information that the image was generated by AI, we still do not look at it as a drawing or a human-made graphic work, but instead as a window onto a specific scene (see for instance the image taken from a right-wing propaganda site on Alberta’s independence movement [Fig. 2]). The image appears indexical or transparent (in Walton’s sense), even though we do not believe in its transparency.

In other words, AI media reproduce the experience of indexicality without the fact of indexicality by parasitically inhabiting the aura of photography. Audiences that are aware of the artificiality of those images still engage with them under a mode of “as-if” realism. They behave as if the depicted scenario were real for the purposes of argument, mobilization, and identity reinforcement. Ideological assumptions (e.g., a “Great Replacement”, the “Deep state conspiracy”) are often treated as literal and tangible realities that simply lack a perfect photographic record. In this context, synthetic images become the “best available evidence” for a believed-but-unseen truth, filling the evidentiary gap with a proof that bears affective efficacy [Fig. 3].

Modern diffusion models are trained on billions of photographs. In the process, they learn the statistical distribution of pixels that constitutes the photographic look. This gives them a formal credibility that feels evidentiary, even when the viewer is consciously aware of the image’s artificiality. Moreover, the viewer's perceptual system, conditioned by a century of treating video and photographic images as unquestionably indexical, spontaneously reads these formal cues as signs of witnessing. Finally, simulated photorealism supports a double stance that is extremely useful in polarized environments: audiences can treat the image as evidence-like for orientation (“This shows what is going on”) while denying full responsibility when challenged (“It’s just AI”, “Just an illustration”, “Just a meme”), all the more so if the image is clearly the product of generative AI. This produces belief-like effects without accountability.

Concerning the second aspect (“apparent impersonality”), it is useful to recall André Bazin’s argument concerning photography’s “objective” nature. In his essay The Ontology of the Photographic Image (Bazin [1945] 1967), he claimed the photograph is an imprint of reality, like a death mask or a fingerprint. A photograph’s authority stems from the bracketing of human subjectivity. The camera is an automatic witness. AI imagery creates a similar rhetoric of authorless generation. The user provides a prompt, but the image is generated by the model. Outputs are easily framed as what the system neutrally produced, with agency distributed across prompts, datasets, and automated procedures. This diffusion of authorship can make ideological content appear less intentional and therefore less contestable, while simultaneously making accountability harder to assign. AI images’ specific visual details are not chosen by a human artist in the way a painter chooses each brushstroke. This creates a sense of objectivity, as if the image came out of the machine’s understanding of the world’s visual data. This apparent neutrality of the medium makes the documentary posture politically usable, since the image can function as evidence-like support for claims even when it is openly synthetic.

Concerning the third aspect (“hyper-real aesthetics”), AI-generated imagery usually exhibits a form of augmented and heightened realism. AI images, even the most photorealistic ones, are not neutral or matter-of-fact, but are algorithmically enhanced and made more striking than ordinary photos, engineered to grab attention: colors are saturated; surfaces are almost too flawless; human figures are rendered according to conventions that match, with emblematic precision, the “types” they are meant to represent (the hypermasculine worker; the rugged farmer; the idyllic family; the “threatening” immigrant; the innocent child, and so on). Their poses are staged according to a dramaturgy that exceeds the prosaic banality of an ordinary photographic snapshot. A growing literature investigates the replication and amplification of representational bias by text-to-image models. Such models can amplify harmful representations unprompted, producing outputs that magnify violence and sexualization even when the input is relatively benign (Hao et al. 2024). More generally, analyses of toxicity and bias in variants of Stable Diffusion (the most used model in AI image generation) indicate that problematic content and biased associations are not exceptional instances but regular behaviors that can be induced under ordinary prompting conditions (Schneider, Hagendorff 2025).

In this regard, media scholar Roland Meyer identifies two key properties of such AI images. First, they function as “cliché amplifiers”, statistically extracting and intensifying stereotypes from their training data (Meyer 2025a). In practice, generative models recombine familiar visual tropes and icons into an idealized average of conventional representations, reinforcing what is already popular or recognizable. Second, they embody a populist aesthetic or “platform realism”, meaning the visuals are fine-tuned to fulfill the immediate, visceral expectations of viewers (Meyer 2025b). Through user feedback loops (likes, shares, click-throughs), the models optimize for outputs that feel emotionally real and ideologically reassuring to a target demographic. Indeed, the prevailing aesthetic is calibrated to the tastes of those most active online (predominantly young, white, male Western audiences), whose biases and engagements effectively shape the model’s sense of “what is real”. This creates a feedback cycle: images that confirm biases or elicit strong emotional reactions gain more engagement, and those signals of engagement in turn guide the generative model’s future outputs. The result is a self-reinforcing loop of credibility, whereby what looks real (in the sense of confirming what the target audience already considers real) is increasingly determined by what aligns with platform metrics and ingrained cultural clichés. In effect, AI imagery leverages in the visual domain what ethologist Niko Tinbergen famously termed “supernormal stimuli”, namely exaggerated cues that provoke outsized instinctive responses (Tinbergen 1951; see also Arielli 2022). Supernormal stimuli can be artificially engineered amplifications of ordinary cues, calibrated to seize attention (see Barrett 2010). Think of unnaturally hypersexualized bodies in fashion magazines, or of commodities advertised through photographs that look better than the real object.
Attention capture is the first step: the hyperreal image tends to displace, in the observer’s imagination, the object it purportedly represents, in line with the simulacral logic Jean Baudrillard famously analyzed. The perfected, intensified, more seductive representation becomes a stand-in for the real and is apprehended as reality in its own right. The manufactured image, by hijacking the subject’s perceptual and cognitive mechanisms, becomes evidence of how things are not despite its enhanced artificiality, but precisely because of it.

Conclusion

The rise of AI-generated and manipulated imagery could mark a further step in a broader process of epistemic corrosion, in which the evidentiary authority historically associated with photography is increasingly superseded by a tribalized logic of communication. In this environment, the observer’s stance often shifts from genuine belief toward strategic acceptance or performative commitment, treating images as emblematic evidence that confirms pre-existing ideological narratives rather than documenting specific, singular events. By leveraging synthetic indexicality and providing supernormal stimuli, generative AI intensifies this process, producing hyper-legible visualizations that route attention toward visceral, affective registers rather than reflective scrutiny. This facilitates a transition from a search for empirical truth to a struggle over imaginaries, where the informational fog and the “liar’s dividend” undermine shared procedures for authenticating reality.

In addition to the three points mentioned earlier (indexicality effect, appearance of impersonality, simulacra that amplify clichés), one should add the quantitative superiority of AI image production: images can be generated rapidly, at scale, and in some cases through automated pipelines that circulate content across digital media. In this sense, AI-generated imagery functions as a tool to establish, through sheer preponderance, the specific imagery that takes hold in viewers’ minds. Testimonial imagery is numerically overwhelmed by the overproduction of synthetic images. The struggle, then, concerns the capacity to dictate modes of imagination and perceptual expectation regarding social and political facts. It is not primarily a matter of bearing witness to reality, but of shaping how reality is to be seen and thought, constructing what becomes possible, plausible, and even inevitable to think. This can be described as a politics of anticipatory imagery: Donatella Della Ratta (2025) captures this point when she examines how generative AI can operate as a tool of “speculative violence” by visually normalizing and prefiguring radical political agendas. This is especially effective for agendas that depend on pre-emptive fear and exceptional measures (border militarization, mass surveillance, collective punishment): the images condition publics to accept controversial policies by making them appear both plausible and unavoidable. By rendering the previously unthinkable in a polished, aesthetic format, these fabrications can bypass familiar interpretive filters and lodge themselves within collective repertoires of expectation.
Therefore, the use of AI images does not merely aim to replace or mimic the tradition of indexical documentation that testifies to “how things are”. It functions as a versatile instrument of ideological communication oriented toward determining “how we should see things”, fusing factual perception with ideologically loaded imaginaries.

Bibliographical references

- Allbeson, Allan 2019

T. Allbeson, S. Allan, The war of images in the age of Trump, in C. Happer, A. Hoskins, W. Merrin (eds.), Trump’s Media War, Basingstoke 2019, 69-84.

- Arielli 2019

E. Arielli, The polarized image: between visual fake news and “emblematic evidence”, “Politics and Image” 6 (2019), 23-35.

- Arielli 2022

E. Arielli, Perfezionamento del sensibile, in D.M. Gagliardi (ed.), Superstimolo: Come il cervello partecipa all’opera d’arte, Roma 2022, 48-70.

- Arielli 2024

E. Arielli, The Theatrics of Believing. Between Fiction and Epistemic Commitment, “Paradigmi. Rivista di critica filosofica” 2 (2024), 279-294.

- Barrett 2010

D. Barrett, Supernormal Stimuli: How Primal Urges Overran Their Evolutionary Purpose, New York 2010.

- Barthes [1980] 1981

R. Barthes, Camera lucida: Reflections on photography [La chambre claire. Note sur la photographie, Paris 1980], trans. R. Howard, New York 1981.

- Bazin [1945] 1967

A. Bazin, The ontology of the photographic image [Ontologie de l’image photographique, “Les Temps modernes” 1 (October 1945), 4-10], trans. H. Gray, in Id., What is cinema?, Berkeley 1967, 9-16.

- Chesney, Citron 2019a

R. Chesney, D. Citron, Deepfakes and the New Disinformation War: The Coming Age of Post-Truth Geopolitics, “Foreign Affairs” 98, 1 (2019), 147-155.

- Chesney, Citron 2019b

R. Chesney, D. Citron, Deep fakes: A looming challenge for privacy, democracy, and national security, “California Law Review” 107, 6 (2019), 1753-1819.

- Cohen 1992

L.J. Cohen, An essay on belief and acceptance, Oxford 1992.

- Della Ratta 2025

D. Della Ratta, Speculative violence as IdeoShock: How AI visually scripts the unthinkable, Verso Blog, 15 July 2025.

- Doane 2007

M.A. Doane, The indexical and the concept of medium specificity, “Differences. A Journal of Feminist Cultural Studies” 18, 1 (2007), 128-152.

- Eliade [1949] 1954

M. Eliade, The Myth of the Eternal Return: Or, Cosmos and History [Le mythe de l’éternel retour: Archétypes et répétition, Paris 1949], trans. W.R. Trask, Princeton 1954.

- Graham 2010

M.H. Graham, Real and Demonstrative Evidence, Experiments and Views, “Criminal Law Bulletin” 46, 4 (1 September 2010), 792.

- Gunning 2004

T. Gunning, What’s the point of an index? Or, faking photographs, “Nordicom Review” 25, 1-2 (2004), 39-49.

- Hao et al. 2024

S. Hao, R. Shelby, Y. Liu, H. Srinivasan, M. Bhutani, B. Karagol Ayan, R. Poplin, S. Poddar, S. Laszlo, Harm amplification in text-to-image models, “arXiv” 2402.01787 (15 August 2024).

- Keyes 2004

R. Keyes, The post-truth era: Dishonesty and deception in contemporary life, New York 2004.

- McIntyre 2018

L. McIntyre, Post-truth, Cambridge (MA) 2018.

- Meyer 2025a

R. Meyer, Echte Emotionen. Generative KI und rechte Weltbilder, “Geschichte der Gegenwart” 2 February 2025.

- Meyer 2025b

R. Meyer, “Platform Realism”: AI Image Synthesis and the Rise of Generic Visual Content, “Transbordeur” 9 (2025), 1-19.

- Mitchell 1992

W.J. Mitchell, The reconfigured eye: Visual truth in the post-photographic era, Cambridge (MA) 1992.

- Mnookin 1998

J.L. Mnookin, The image of truth: Photographic evidence and the power of analogy, “Yale Journal of Law & the Humanities” 10, 1 (1998), 1-74.

- Newman, Garry, Bernstein, et al. 2012

E.J. Newman, M. Garry, D.M. Bernstein, et al., Nonprobative photographs (or words) inflate truthiness, “Psychonomic Bulletin & Review” 19 (2012), 969-974.

- Peirce [1931-1958]

C. Hartshorne, P. Weiss, A.W. Burks (eds.), Collected Papers of Charles Sanders Peirce, 8 vols, Cambridge (MA) 1931-1958.

- Rini 2017

R. Rini, Fake news and partisan epistemology, “Kennedy Institute of Ethics Journal” 27, 2 (2017), E43-E64.

- Roberts 2017

D. Roberts, Donald Trump and the rise of tribal epistemology, “Vox” 19 May 2017.

- Schneider, Hagendorff 2025

M. Schneider, T. Hagendorff, Investigating toxicity and bias in Stable Diffusion text-to-image models, “Scientific Reports” 15, 31401 (2025).

- Stalnaker 1984

R. Stalnaker, Inquiry, Cambridge (MA) 1984.

- Tagg 1988

J. Tagg, The burden of representation: Essays on photographies and histories, Amherst 1988.

- Tuomela 2000

R. Tuomela, Belief versus Acceptance, “Philosophical Explorations” 2 (2000), 122-137.

- Walton 1984

K.L. Walton, Transparent Pictures: On the Nature of Photographic Realism, “Critical Inquiry” 11, 2 (1984), 246-277.

- Wardle, Derakhshan 2017

C. Wardle, H. Derakhshan, Information disorder: Toward an interdisciplinary framework for research and policymaking, Strasbourg 2017.

- Westrick 2019

S. Westrick, The Napalm Girl Matters more Today than Ever Before, “Environmental Journalism” (September 2019).

- Williams 1973

B. Williams, Deciding to believe, in Id., Problems of the Self: Philosophical Papers 1956-1972, Cambridge 1973, 136-151.

- Wray 2001

K. Wray, Collective Belief and Acceptance, “Synthese” 129 (2001), 319-333.

Abstract

Emanuele Arielli’s paper examines how falsified, AI-generated, or misattributed images function not as failures of reference but as tools of symbolic consolidation. In contemporary far-right and nationalist propaganda, stock photos, memes, and recycled or synthetic imagery are used to construct visual archetypes that embed themselves in collective imagination regardless of factual accuracy. Rather than treating such images merely as deceptive or epistemically flawed, the article introduces the concept of emblematic evidence: images that, despite being false or decontextualized, are perceived as legitimate referents for ideological narratives. They give visual form to beliefs already assumed to be true: they are less images pointing to truth than truths seeking images. In this sense, they ritualize belief, turning images into markers of ideological belonging rather than referential proof. This reversal reflects a broader epistemic shift from verification to affective identification. Detached from their indexical referents, such images circulate more freely as mythic condensations of polarized worldviews. Crucially, their falsity is often performatively displayed: epistemic inaccuracy becomes not a flaw but a feature, reinforcing political identity by signaling distance from mainstream regimes of truth.

keywords | Emblematic evidence, Image manipulation, Misattribution, Epistemic accuracy.

This issue of Engramma is by invitation: the review of the essays was entrusted to the journal’s editorial committee and international advisory board.

To cite this article: E. Arielli, Not True, but Right. Falsity and Inaccuracy as Political Visual Strategy, “La Rivista di Engramma” n. 233 (April 2026).